Is Data-Driven AI Brainwashing us all, or is it Just the Same as Good ol' Marketing?

The many claims made as part of the recent Cambridge Analytica & Facebook scandal, reviewed

This past month’s Cambridge Analytica scandal triggered some important conversations regarding privacy and the current uses of AI in social platforms. It is also another great example of how flawed reporting can create wide-reaching but inaccurate narratives about AI.

What Happened

This story started on March 16, with a seemingly naive blog post by Facebook’s VP & Deputy General Counsel Paul Grewal. The post announced that Cambridge Analytica is now suspended from Facebook and gave the first outline of the story from Facebook’s perspective:

- Alexander Kogan, a researcher at Cambridge University, built an app called ‘yourdigitallife’ back in 2013

- Kogan passed the Facebook profile data of anyone who downloaded it to Cambridge Analytica, which violated Facebook’s terms and conditions

- Facebook found this out in 2015, discontinued the app and asked for certification from all parties involved that the data was deleted.

- Recently, Facebook found out the data was not deleted in its entirety and then suspended Cambridge Analytica and published the blog post.

So far so good.

What Facebook didn’t tell us was that the blog post was a preemptive measure to influence public perception before the next day’s generous coverage of this story as told by Christopher Wylie, the whistleblower of this scandal and one of Cambridge Analytica’s co-founders. The Guardian and the New York Times ran variants of the story, with the Guardian’s piece probably being the most notable. While the NYT quoted Wylie as saying that he built for Cambridge Analytica ‘an “arsenal of weapons” in a culture war,’ the Guardian took a more personal angle, describing Wylie as ‘clever, funny, bitchy, profound, intellectually ravenous, compelling. A master storyteller.’ among other lofty compliments. The Guardian also released a sleek video interview with Wylie that has so far been seen more than 1.3 million times on YouTube.

These articles brought some troubling new facts to light:

- Kogan sold the data to Wylie and his colleagues.

- Although only 270,000 people downloaded his app, the data of 50 million people ended up in the hands of Cambridge Analytica, just by virtue of their being friends with users who downloaded the app.

We now know it was more like 87 million, a staggering quantity that would have been unthinkable before the internet age.

The Reactions

The story soon evolved beyond the facts of the ‘data breach’ to be about how this spooky new thing called personal data was used to wage psychological warfare. Warfare quite literally, in that the reports claimed it might have been a key factor in the results of Brexit and the 2016 American presidential election.

It led some, like François Chollet, to question and speculate about the nature of algorithm-driven social media platforms in their current form. Chollet’s main argument is that while we worry about AI robots taking over, reality is already more troubling: AI algorithms such as the ones already used by Facebook can influence and perhaps even ‘brainwash’ users, virtually to the point of mind control. By curating which bits of information we get to see, and selecting information that will be personally influential to us (measured by how much we react to it, and with which sentiment), they can alter our world view. Social pressure, AI edition.

“Integrated over many years of exposure, the algorithmic curation of the information we consume gives the algorithms in charge considerable power over our lives — over who we are, who we become. If Facebook gets to decide, over the span of many years, which news you will see (real or fake), whose political status updates you’ll see, and who will see yours, then Facebook is in effect in control of your worldview and your political beliefs.” -François Chollet

Another wave of reporting on the topic came on the heels of Mark Zuckerberg’s hearing before the US Congress, which focused on the recent Cambridge Analytica scandal, as well as on earlier reports about Facebook’s involvement in the Russian election meddling. Perhaps appropriately for a story about social media and the internet, there were some less serious elements to the reporting, such as all the informative and hilarious memes. The more serious reporting questioned Zuckerberg’s repeated claims that AI will fix most of the issues he was questioned about (primarily moderating hate speech, fake news, and inappropriate targeted political advertising). Most reports slammed Facebook for making that claim, explaining that AI cannot be relied on, that it will never solve Facebook’s problems, or that the whole idea is nothing more than an excuse to buy time. Sarah Jeong of the Verge claimed that placing hopes in the ‘AI will fix it’ mantra is not only running from responsibility, it will also be the basis of the next Facebook hearing.

Oh, and of course, #deletefacebook.

Our Perspective

The storm that has been rolling for just about a month now has many facets, but here are our main takeaways on just the AI aspects of it:

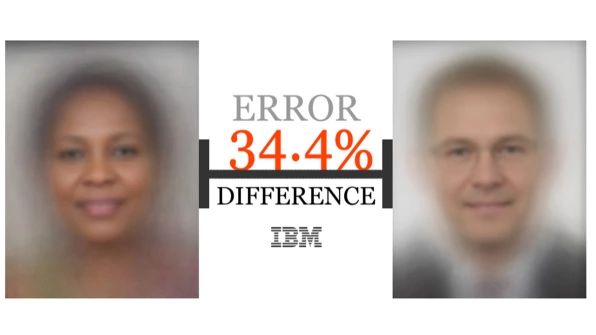

- AI isn’t mind controlling us just yet. By accepting Cambridge Analytica’s and Christopher Wylie’s narrative about the powerful psychological weapons they built, we fall prey to their evidently effective PR. There is little evidence of the role their tools actually played in the Brexit/Trump votes, and we have little scientific evidence that targeted ads online can actually mind control anyone, as Chris Kavanagh excellently argued in his widely read Medium piece. Was it really this infamous weapon that tipped the vote, much more than the FBI’s announcement regarding Clinton’s email probe just days before the election, or the fundamental anti-establishment sentiments simmering in parts of the US and Britain prior to the votes? Perhaps, but the evidence for this is scant. Besides, judging by the current state of targeted ads, it looks like we’re technically still pretty far off:

Dear Amazon, I bought a toilet seat because I needed one. Necessity, not desire. I do not collect them. I am not a toilet seat addict. No matter how temptingly you email me, I'm not going to think, oh go on then, just one more toilet seat, I'll treat myself.

— Jac Rayner (@GirlFromBlupo) April 6, 2018

- By pinning the problem on AI, or claiming that ‘AI will never solve the problem’, authors are making the same kind of sweeping arguments they claim to be criticizing. No, AI will not save us all from everything. But AI is not a static one-trick pony. These are by definition tools that get better over time and can be trained on different data with different objectives; we can become, and are becoming, better at using them to address a wide variety of problems, including the moderation problem at hand. It will be an ongoing battle between moderators and violators, and moderators should use all the tools at their disposal. We should, however, invest far more resources in developing more transparent AI, and demand that curating algorithms give us back control over what they’re optimizing for, as François Chollet rightfully suggests.

- Much of the reaction to this story is emotional: we feel like we were betrayed by that friend we stalk our exes with, the one we ask for restaurant recommendations. Other platforms are not personal in the same way. The combination of the nature of this platform and the end to which its data was allegedly used (some of the most divisive, controversial votes we have seen in years) makes us all react more vocally than usual.

Above all, a productive conversation should not focus on smearing the tools or the technology; that’s too easy and too simplistic. It should focus on the values we would like them to reflect. We should also be mindful of the present-day limitations of the technology, even as we stay aware of its possible, and eventually much more severe, misuse.

TLDR

A breach of trust, not of technology, led to a scare about data accumulation, its use for psychological warfare, and claims about sinister/useless AI. The truth, as always, is somewhat more complicated. Tech giants should be much more transparent about future incidents and yes, employ AI as a tool in this battle, among other tools. And the brainwashing algorithms are not here quite yet.