Why We Find Self-Driving Cars So Scary

Even if autonomous cars are safer overall, the public will accept the new technology only when it fails in predictable and reasonable ways

Image credit: https://gfycat.com/gifs/detail/RawSlimyGreatdane

The following opinion piece originally appeared in The Wall Street Journal on May 31, 2018, and is republished here with permission.

Tesla CEO Elon Musk recently took the press to task, decrying the “holier-than-thou hypocrisy of big media companies who lay claim to the truth, but publish only enough to sugarcoat the lie.” That, he said, is “why the public no longer respects them.”

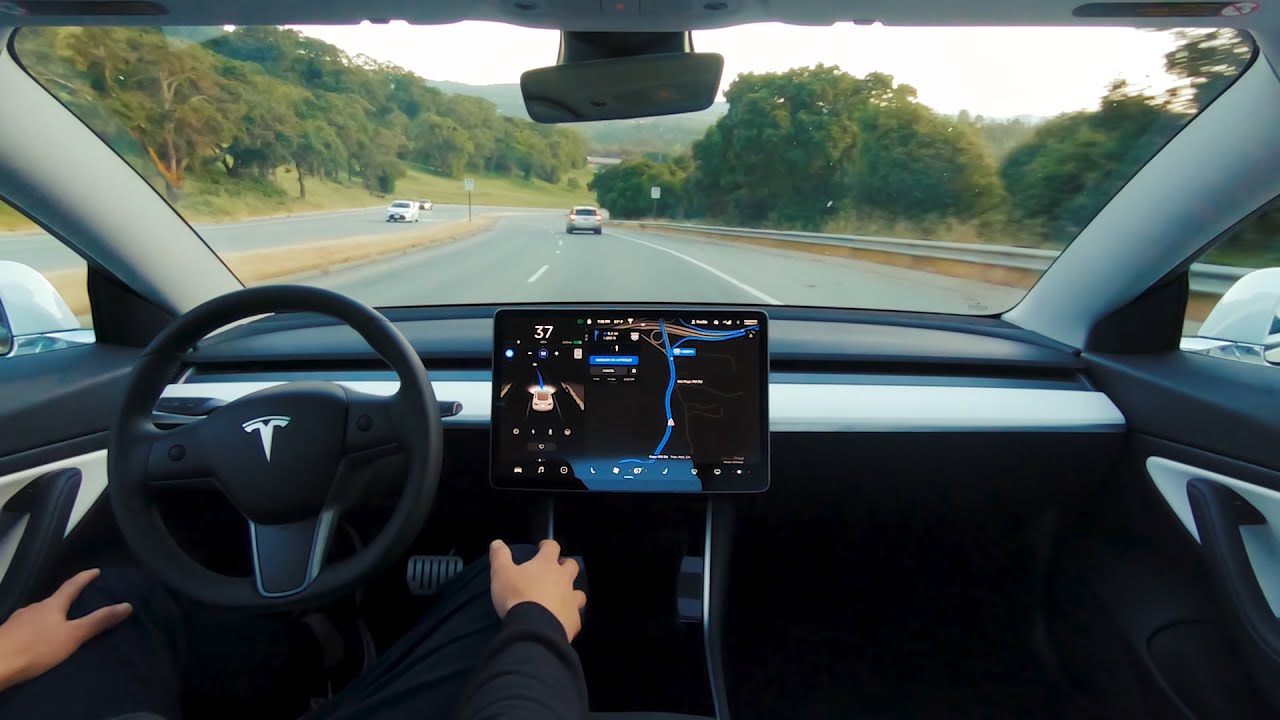

Mr. Musk’s frustration is fueled in part by widespread media attention to accidents involving Tesla’s self-driving “autopilot” feature. On the company’s most recent earnings call, he said that “there’s over one million…automotive deaths per year. And how many do you read about? Basically, none of them…but, if it’s an autonomous situation, it’s headline news…. So they write inflammatory headlines that are fundamentally misleading to the readers. It’s really outrageous.”

But not all accidents are created equal. While most experts on the subject (including me) agree with Mr. Musk that autonomous vehicles can dramatically reduce auto-related deaths overall, his criticism of the press betrays a misunderstanding about human nature and the perception of risk: Even if self-driving cars are safer overall, how and when they fail matters a lot.

If their mistakes mimic human errors, such as failing to negotiate a curve in a driving rain storm or neglecting to notice a motorcycle approaching from behind when changing lanes, people are likely to be more accepting of the new technology. After all, if you might reasonably make the same mistake, is it fair to hold your self-driving car to a higher standard? But if their failures seem bizarre and unpredictable, adoption of this nascent technology will encounter serious resistance. Unfortunately, failures of this second kind are likely to persist.

Would you buy a car that had a tendency—no matter how rare—to run off the road in broad daylight because it mistakes a glint off its camera lens for the sudden appearance of truck headlights racing toward it at close range? Or one that slams on the brakes on a highway because an approaching rain storm briefly resembles a concrete wall?

Suppressing these “false positives” is already a factor in some accidents. A self-driving Uber test vehicle in Tempe, Ariz., recently killed a pedestrian walking her bike across the street at night, even though its sensors registered her presence. The algorithms interpreting that data were allegedly at fault, presumably confusing the image with the all-too-common “ghosts” captured by similar instruments operating in marginal lighting conditions. But pity the hapless engineer who fixes this problem by lowering the thresholds for evasive action, only to find that the updated software takes passengers on Mr. Toad’s Wild Ride to avoid imaginary obstacles lurking around every corner.

Unfortunately, this problem is not easily solved with today’s technology. One shortcoming of current machine-learning programs is that they fail in surprising and decidedly non-human ways. A team of Massachusetts Institute of Technology students recently demonstrated, for instance, how one of Google’s advanced image classifiers could be easily duped into mistaking an obvious image of a turtle for a rifle, and a cat for some guacamole. A growing academic literature studies these “adversarial examples,” attempting to identify how and why these fakes—completely obvious to a human eye—can so easily fool computer vision systems. Yet the basic reason is already clear: These sophisticated AI programs don’t interpret the world the way humans do. In particular, they lack any common-sense understanding of the scenes they are attempting to decipher. So a child holding a balloon shaped like a crocodile or a billboard displaying a giant 3-D beer bottle on a tropical island may cause a self-driving car to take evasive actions that spell disaster.

As the six-decade history of AI illustrates, addressing these problems will take more than simply fine-tuning today’s algorithms. For the first 30 years or so, research focused on pushing logical reasoning (“if A then B”) to its limits, in the hope that this approach would prove to be the basis of human intelligence. But it has proved inadequate for many of today’s biggest practical challenges. That’s why modern machine learning is trying to take a holistic approach, more akin to perception than logic. How to pull that off—to achieve “artificial general intelligence”—is the elusive holy grail of AI.

Our daydreams of automated chauffeurs and robotic maids may have to wait for a new paradigm to emerge, one that better mimics our distinctive human ability to integrate new knowledge with old, apply common sense to novel situations and exercise sound judgment as to which risks are worth taking.

Ironically, Mr. Musk himself is a major contributor to misunderstanding of the actual state of the art. His ceaseless hyping of AI, often accompanied by breathless warnings of an imminent robot apocalypse, only serves to reinforce the narrative that this technology is far more advanced and dangerous than it actually is. So it’s understandable if consumers react with horror to stories of self-driving cars mowing down innocent bystanders or killing their occupants. Such incidents may be seen as early instances of malevolent machines running amok, instead of what they really are: product design defects.

Despite the impression that Jetson-style self-driving cars are just around the corner, public acceptance of their failures may yet prove to be their biggest speed bump. If proponents of autonomous technologies—from self-driving cars to military drones to eldercare robots—are to make their case in the court of public opinion, they will need more than cold statistics and controlled testing. They must also address the legitimate expectation of consumers that the new generation of AI-driven technology will fail in reasonable and explainable ways. As the old joke goes, to err is human, but it takes a computer to really foul things up. Mr. Musk would be well advised to hear that message before shooting the messenger.

Dr. Kaplan teaches about artificial intelligence at Stanford University. His latest book is “Artificial Intelligence: What Everyone Needs to Know.” Appeared in the June 2, 2018, print edition as ‘Why We Find Self-Driving Cars So Scary.’