IBM, Microsoft, and Amazon Halt Sales of Facial Recognition to Police, Call for Regulations

Movements against unregulated police use of facial recognition, which compounds the technology's many ethical issues, are gaining speed

Image credit: David McNew/AFP/Getty Images via The Guardian

Summary

- Recent protests against racial discrimination and police brutality led IBM to cease developing facial recognition technology, and Microsoft and Amazon to pause selling face recognition technology to law enforcement.

- While this is a good starting point, as companies are not only acknowledging but addressing known flaws and biases in face recognition systems, much work remains to be done.

- In particular, continued pressure from the public is needed to ensure ethical developments and regulations of face recognition technology in the future.

What Happened

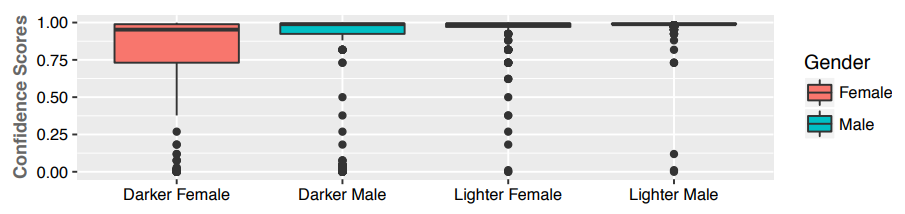

In a June 8 letter to members of Congress, IBM declared its opposition to technologies that would enable surveillance, including facial recognition. Microsoft and Amazon followed suit on facial recognition, with Microsoft banning police use and Amazon placing a one-year moratorium on police use. Other companies, such as Clearview AI, still offer the technology to police departments. As The Washington Post reports, IBM’s decision to drop facial recognition technology was preceded by years of debate, particularly in response to Gender Shades, a 2018 study that found that industry facial recognition systems have much higher error rates on darker-skinned faces.

Civil rights and justice groups, including the Algorithmic Justice League and ACLU, have commended these actions from IBM and Amazon:

The Algorithmic Justice League commends this decision as a first move forward towards company-side responsibility to promote equitable and accountable AI.

It took two years for Amazon to get to this point, but we’re glad the company is finally recognizing the dangers face recognition poses to Black and Brown communities and civil rights more broadly.

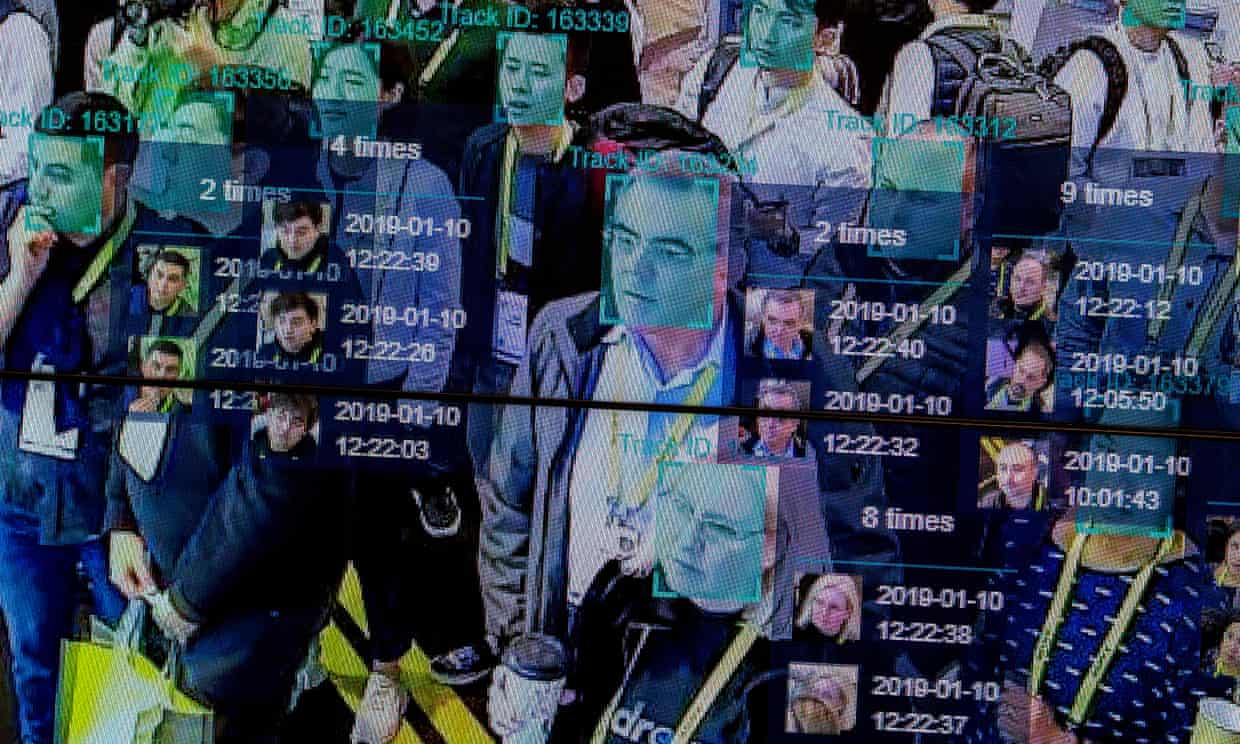

Facial recognition technology, capable of identifying a person from a digital image or video frame, has been under development for decades. With the major advances in deep learning over the past decade, facial recognition has also seen substantial progress. Modern AI-powered face recognition systems rely on large datasets of faces for two purposes: 1) training a neural network that can extract key features of human faces and 2) finding similar-looking faces using these features. There are multiple privacy and ethical concerns with collecting such large datasets, ranging from biased data that results in biased predictions to how these data can be collected without consent.
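The second step described above, finding similar-looking faces from extracted features, can be sketched in a few lines of Python. This is a minimal illustration, not how any particular vendor's system works: it assumes a trained network has already turned each face into a feature vector (an "embedding"), and it uses cosine similarity as the comparison measure. The names `cosine_similarity`, `find_similar_faces`, the `person_*` labels, and the 4-dimensional vectors are all hypothetical; real embeddings typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def find_similar_faces(query, gallery, top_k=2):
    """Rank gallery entries by similarity to the query and keep the top_k."""
    scored = [(name, cosine_similarity(query, emb)) for name, emb in gallery.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical embeddings; in practice a neural network would produce these.
gallery = {
    "person_a": [0.9, 0.1, 0.0, 0.2],
    "person_b": [0.1, 0.8, 0.3, 0.0],
    "person_c": [0.0, 0.2, 0.9, 0.1],
}
query = [0.85, 0.15, 0.05, 0.25]

matches = find_similar_faces(query, gallery)
for name, score in matches:
    print(f"{name}: {score:.3f}")
```

Note that the search always returns a ranked list, even when the person in the query image is not in the dataset at all. Whether the top result counts as a "match" depends on a similarity threshold chosen by whoever operates the system.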

The recent controversies regarding face recognition are specifically centered around use by law enforcement. There are currently no federal laws, and few local ones, regulating how and when police and other government agencies can use such technologies. This is despite commercial offerings having been available for years and already being used by some police departments. When these flawed systems are applied by law enforcement, they can place people of color at higher risk due to the higher error rate. Even if these systems worked perfectly, they could still be “easily weaponized against communities to harass them.”
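To make the point about uneven error rates concrete, here is a small sketch of how error rates can be broken down by demographic group instead of reported as a single overall number. The records below are made-up toy values chosen only to illustrate the arithmetic; they are not measurements from Gender Shades or any other study, and `error_rate_by_group`, `group_x`, and `group_y` are hypothetical names.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: fraction of records where the prediction was wrong}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Toy records: (group, predicted identity, true identity). Illustrative only.
records = [
    ("group_x", "id_1", "id_1"),
    ("group_x", "id_2", "id_2"),
    ("group_x", "id_3", "id_3"),
    ("group_x", "id_4", "id_9"),
    ("group_y", "id_5", "id_5"),
    ("group_y", "id_6", "id_8"),
    ("group_y", "id_7", "id_2"),
    ("group_y", "id_8", "id_8"),
]

rates = error_rate_by_group(records)
overall = sum(1 for _, p, a in records if p != a) / len(records)
print(rates, overall)
```

In this toy data the overall error rate sits between the two group rates, which is why a single aggregate figure can hide the fact that one group is misidentified twice as often as another.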

The Reactions

From the Press:

- Vox notes that public statements on facial recognition from such major companies are a step forward, but there are also concerns that smaller companies like Clearview AI may step in to take their place. Some are also skeptical of the recent moves by tech companies, calling them PR stunts:

Evan Greer, the deputy director of Fight for the Future, called Amazon’s announcement “a public relations stunt.” The Surveillance Technology Oversight Project, a New York-based anti-surveillance legal organization, called the company’s move “too little, too late.” Its director, Albert Fox Cahn, added in an emailed statement, “Amazon shouldn’t just end this practice for one year or one decade; it should end it forever.”

- Forbes reports that amid the condemnations and moratoriums, the different sorts of technology are conflated enough that people aren’t quite sure what exactly is being renounced. Nonetheless, the public attention on face recognition systems is a good starting point for ensuring the ethical development of this technology in the future.

This should then be backed up with a strong set of principles and ethical standards that the industry can follow. The result will likely be a stronger foundation for AI.

- Wired also believes that we are far from the end with facial recognition, agreeing with Vox that plenty of smaller companies are still there to fill the void. The technology produced by these companies is prey to the same fatal flaws that resulted in the blowback against IBM et al. in the first place.

From the Experts:

- Some, such as Jathan Sadowski (research fellow at Monash U emerging tech lab), see the “victory” of pushback against facial recognition as merely symbolic and an opportunity to push harder.

Yes, I see this not so much as winning a battle, but as an opportunity to go on the offensive, to push harder, to actually extract real and lasting victories. We've all had fight so hard just to gain enough ground to strike back. And I'm finally starting to feel a bit surefooted.

— Jathan Sadowski (@jathansadowski) June 10, 2020

- Albert Fox Cahn described the step as a milestone, but “like hearing an arsonist agree to stop pouring gasoline on a forest fire, without doing much to put out the blaze.”

I agree that’s a huge milestone, but it feels like hearing an arsonist agree to stop pouring gasoline on a forest fire, without doing much to put out the blaze, and I hope many see it as a sign of just how much more can be accomplished through even more pressure.

— Albert Fox Cahn🔯 (@FoxCahn) June 10, 2020

- Regardless, these steps, even Amazon’s one-year moratorium, are seen as progress in the right direction.

This is almost unbelievable! Nonstop activism + timing = Amazon has finally relented and is making a move in the right direction: implementing a one-year moratorium on the police use of its facial recognition tech, Rekognition! h/t @BrendaKLeong https://t.co/W5fWEaHtpY

— Evan Selinger (@EvanSelinger) June 10, 2020

From the Source:

After the announcements from IBM, Microsoft, and Amazon, Microsoft’s president, Brad Smith, repeated the call for federal regulation of facial recognition technology:

“We need to start teasing this issue apart, to understand it better and move just beyond a binary conversation of: permit it or ban it,” Smith said. “And think about: what is the right way to regulate it?”

Summary

- The enthusiasm for IBM’s, Microsoft’s, and Amazon’s moves on facial recognition has been mixed.

- Adding to the worries is the existence of other companies peddling facial recognition technology, such as Clearview AI.

- While many see these announcements as a small step down a long road, they do see them as a step in the right direction.

Our Perspective

Amazon and Microsoft have engaged in the same marketing of facial recognition that is being lambasted today. While Amazon’s contracts are already public knowledge, Microsoft was recently found to have pitched its facial recognition technology to the Drug Enforcement Administration (DEA) as far back as 2017. The recent announcements from IBM, Microsoft, and Amazon are a promising step forward in the fight against unfettered use of facial recognition technology, particularly in policing. There are strong arguments that due to the inherent difficulties in fairly implementing automation technology in law enforcement, police should be banned from using facial recognition altogether. In addition, as John Oliver pointed out, development of facial recognition in and of itself has opened a Pandora’s Box that even some in Silicon Valley were too afraid to touch.

In particular, the following immediate issues remain:

- Amazon is still engaged with the police in surveillance applications: Ring has allegedly partnered with over 1,000 police departments in the US.

- Even if Microsoft and Amazon decide to go as far as IBM has in the moral sense, the fact remains that companies like Clearview AI and Banjo continue to offer facial recognition services. As long as there are people who want to continue to use facial recognition for any purpose, its development will go on.

- Without substantive federal regulations on such technology, no one company (or three companies) will have much of an impact on the development and use of facial recognition technology and the associated risks.

The current spotlight on the existing and potential abuses of face recognition technology is pressuring both industry leaders and governments to re-evaluate and re-imagine the future of face recognition. Congress is currently considering a police reform bill that limits face recognition in law enforcement. Many see this bill and the recent responses from tech companies as promising starting points. However, more pressure is needed to ensure fair and ethical uses of face recognition and other AI technologies with significant social impacts.

Conclusion

The moves by IBM, Microsoft, and Amazon to stop developing or pause selling face recognition technology, especially for law enforcement, at least until adequate federal regulations are in place, are promising starting points to ensure ethical uses of this technology. Although studies have revealed flaws in commercial face recognition systems for years, companies are only now taking action as a result of recent protests and the national conversation on racial discrimination and police brutality. This suggests that continuous pressure from consumers, civil liberties groups, and the public at large is needed to ensure progress in the ethical development and regulation of face recognition technology.